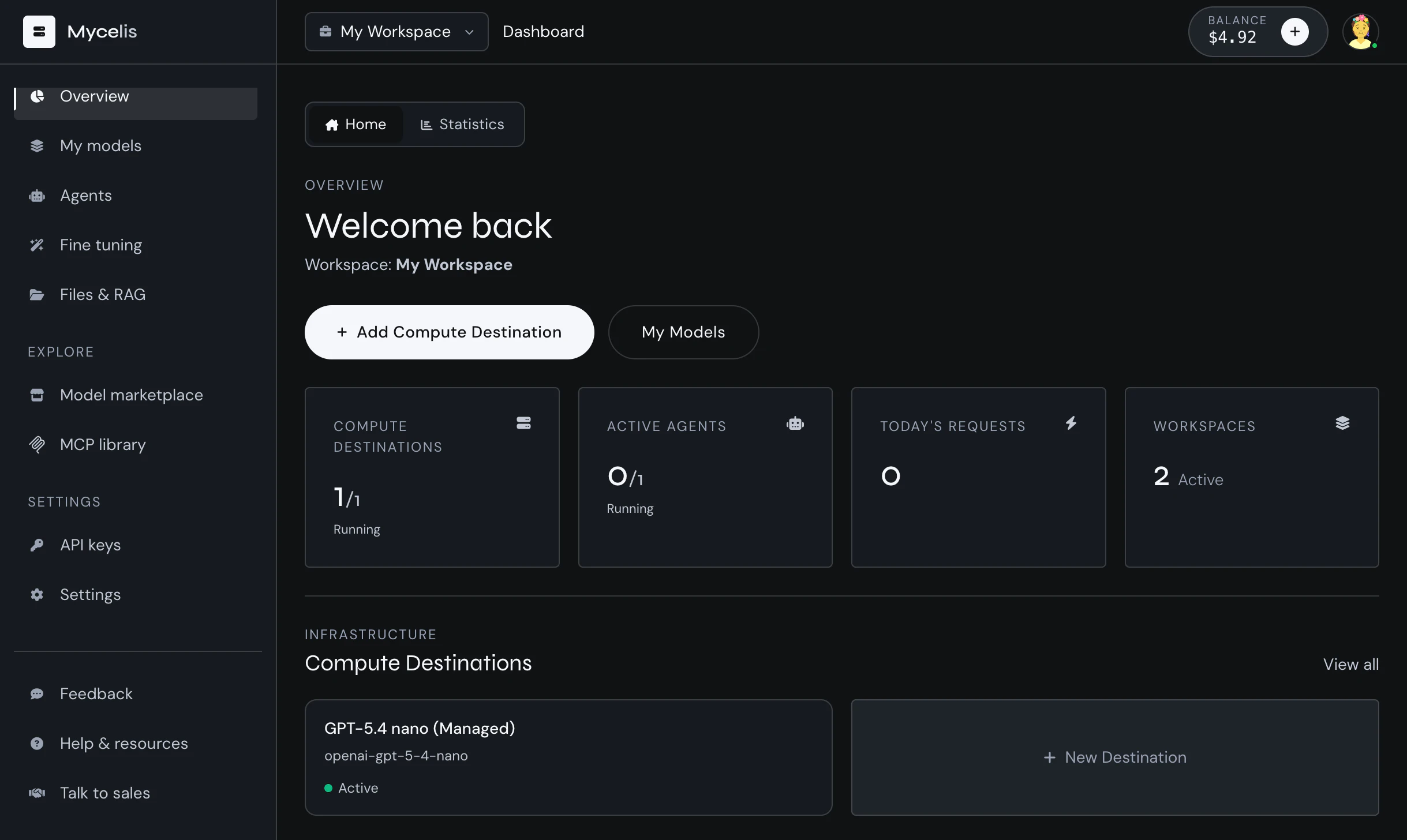

Mycelis

Serverless AI workspace with smart routing & MCP agents

Mycelis abstracts hardware completely. Instantly spin up open-source models on optimized GPUs, or bring your own keys (BYOK). It acts as a unified OpenAI-compatible API gateway for coding clients like OpenCode, desktop apps, or scripts. Includes conditional smart routing, a vector-powered semantic cache to slash costs, integrated RAG, and native MCP agents (GitHub, Slack, Discord, etc.). Get full control over your AI environment with zero server maintenance.
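To make "conditional smart routing" concrete, here is a minimal sketch of how a gateway might choose a model per request. It is an illustration only, not Mycelis's actual routing logic: the `Route` type, the `pick_model` function, the `task` field, the 8000-character cutoff, and all model names are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Route:
    """One routing rule: if `matches` returns True, send the request to `model`."""
    name: str
    model: str  # hypothetical model identifier
    matches: Callable[[dict], bool]


def pick_model(request: dict, routes: List[Route], default: str) -> str:
    """Return the model of the first matching route, or the default model."""
    for route in routes:
        if route.matches(request):
            return route.model
    return default


# Hypothetical rules: coding tasks go to a code model, very long prompts
# go to a long-context model, and everything else uses a small default model.
ROUTES = [
    Route("code", "hypothetical-code-model", lambda r: r.get("task") == "code"),
    Route("long", "hypothetical-long-context-model", lambda r: len(r["prompt"]) > 8000),
]

if __name__ == "__main__":
    request = {"prompt": "Refactor this function", "task": "code"}
    print(pick_model(request, ROUTES, default="hypothetical-small-model"))
```

Rules are evaluated in order, so the most specific conditions should come first. A real gateway would likely also route on cost, latency, or provider availability.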

AI Analysis

Mycelis is a serverless AI workspace that fully abstracts hardware infrastructure. Core features include instant deployment of open-source models on optimized GPUs, BYOK support, an OpenAI-compatible API gateway for clients like coding tools and scripts, conditional smart routing, vector-powered semantic cache for cost reduction, integrated RAG, and native MCP agents for seamless integrations with GitHub, Slack, Discord and more. It solves key user pain points such as complex server maintenance, unpredictable AI inference costs, fragmented toolchains, and lack of control over AI environments. The value proposition is delivering a unified, zero-maintenance platform for efficient, cost-effective, and fully customizable AI development and deployment.
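The cost-reduction claim for a semantic cache rests on one idea: if a new prompt is close enough in meaning to one already answered, the stored answer is returned and no model call is made. The sketch below shows that idea under loud assumptions. The `SemanticCache` class and the 0.8 threshold are hypothetical, and `embed` is a toy bag-of-words stand-in for the learned embedding model a real vector cache would use. It does not describe how Mycelis implements its cache.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: word counts. A real cache would call an embedding model."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class SemanticCache:
    """Returns a stored response when a new prompt is similar enough to an old one."""

    def __init__(self, threshold: float = 0.8):
        self.threshold = threshold  # hypothetical similarity cutoff
        self.entries = []  # list of (embedding, response) pairs

    def get(self, prompt: str):
        """Return the best cached response at or above the threshold, else None."""
        query = embed(prompt)
        best_score, best_response = 0.0, None
        for vector, response in self.entries:
            score = cosine(query, vector)
            if score > best_score:
                best_score, best_response = score, response
        return best_response if best_score >= self.threshold else None

    def put(self, prompt: str, response: str) -> None:
        """Store a prompt's embedding together with the model's response."""
        self.entries.append((embed(prompt), response))


if __name__ == "__main__":
    cache = SemanticCache()
    cache.put("what is the capital of France", "Paris")
    print(cache.get("what is the capital of france ?"))  # near-duplicate: cache hit
    print(cache.get("how do I sort a list in python"))   # unrelated: cache miss
```

The threshold is the main design trade-off: a lower value saves more calls but risks returning an answer to a question that only looks similar. The linear scan is for clarity; a production cache would use a vector index.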

Timing: excellent. In 2025-2026, several trends favor the product: rapid AI adoption, maturing open-source LLMs, rising demand for cost-optimized inference under economic pressure, and the shift toward serverless architectures. User demand for simpler AI operations and integrated agent workflows lines up with advances in GPU cloud technology and API standardization.

Feasibility: high. Technical difficulty is moderate because the product builds on existing cloud GPU providers, open-source models, and established API frameworks. Development costs for routing, caching, and agents are significant but manageable. Operational GPU costs are high but can be offset by usage-based pricing. Scalability potential is strong, supply chain risk is low, and compliance requirements are standard (mainly data privacy).

Main target segments: AI/ML developers, software engineers, indie hackers, startups, and mid-sized tech companies building AI apps. Industries: software development and tech services. Geography: global, concentrated in the US, Europe, and China. Estimated market size: total addressable market (TAM) for AI development and inference tools above $100B, serviceable market (SAM) for serverless API gateways around $5-10B, and obtainable market (SOM) in the hundreds of millions of dollars. Core pains: infrastructure management overhead, high and variable costs, and integration complexity. Willingness to pay for cost savings and time efficiency is high.

Competition: medium. Direct competitors: OpenRouter (openrouter.ai), Together AI (together.ai), Groq (groq.com), LiteLLM (litellm.com), and Helicone (helicone.ai). Mycelis advantages: deeper hardware abstraction for open-source models, a vector-powered semantic cache, native MCP agents, and integrated RAG in one workspace. Disadvantages: it is likely newer, with a smaller ecosystem and less brand recognition than established players, and its pricing transparency and performance at scale still need validation against specialized fast-inference providers such as Groq.

Similar Products

Graphbit PRFlow - AI Code Review Agent

AI code reviewer that catches what others miss

▲ 175 votes

Jotform Claude App

Build, edit, and analyze forms directly in Claude

▲ 157 votes

Polygram

AI-native design and coding app to build mobile & web apps

▲ 81 votes

Agent-Sin

AI agent that handles repeated tasks through reusable skills

▲ 78 votes

Mantel

Stop confusing your Claude Code sessions & terminal windows

▲ 72 votes

Stagent

Drive Claude Code through long tasks it would otherwise drop

▲ 58 votes