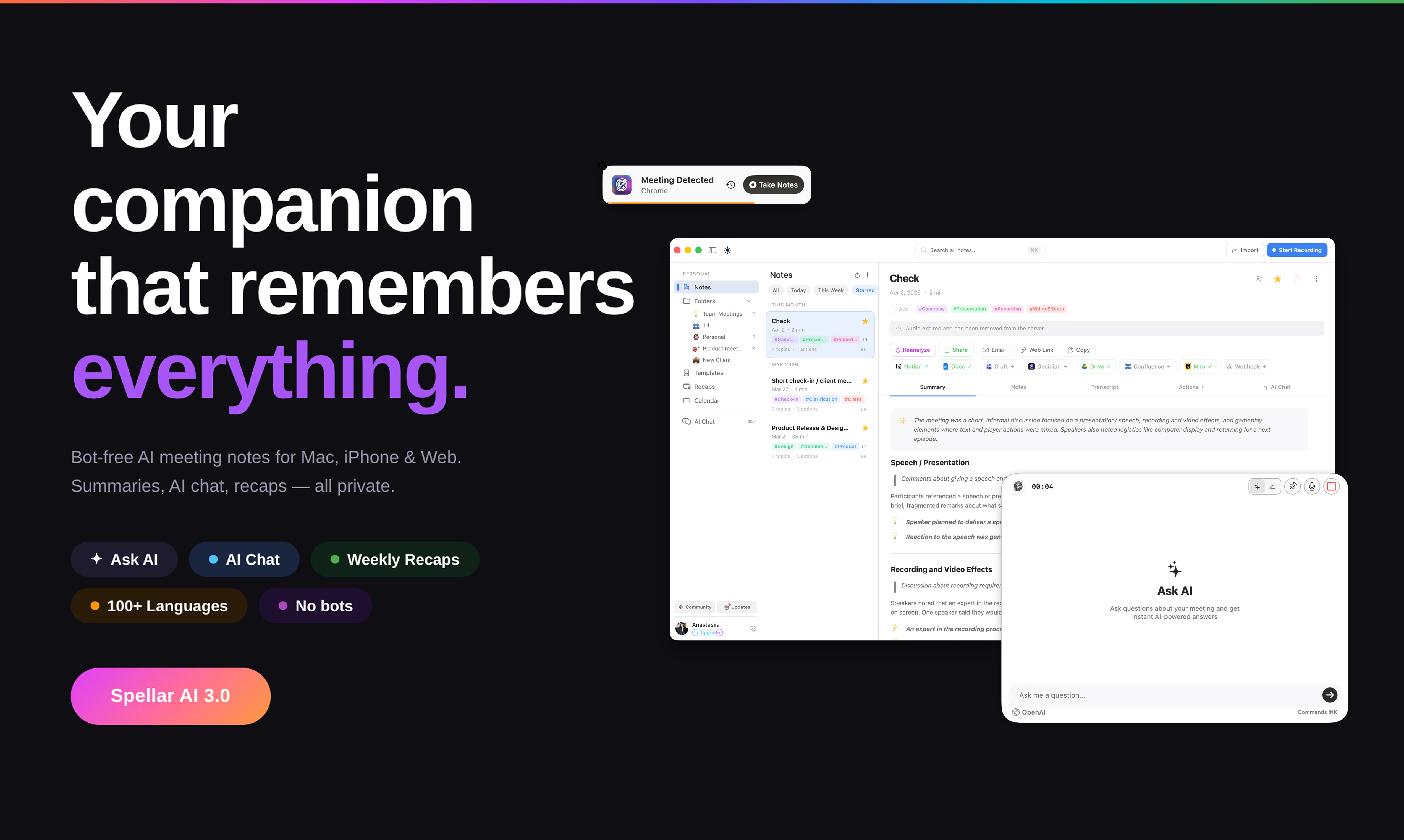

Spellar 3.0

AI meeting companion with cross-meeting memory

Most meeting tools give you notes. Spellar AI gives you memory. It joins your calls, captures every word, and builds context across all your meetings. Ask what a client said three calls ago. Find decisions from last week. See what’s still open. Organize by client, use templates, and choose the AI you trust — OpenAI, Anthropic, Perplexity, Gemini and more!

AI Analysis

Spellar 3.0 is an AI meeting companion that builds persistent cross-meeting memory instead of one-off notes. It auto-joins calls, transcribes conversations, and creates searchable context across meetings, so users can query past client statements and track decisions, open items, and progress. Key features include client/project organization, customizable templates, and a choice of LLMs (OpenAI, Anthropic, Perplexity, Gemini). It addresses the fragmentation of meeting insights and the loss of historical context in recurring interactions. Value proposition: it turns scattered calls into a queryable organizational memory to improve productivity and decision quality.

In 2025-2026, LLM context windows, retrieval-augmented generation (RAG), and multimodal AI have matured enough to enable reliable long-term meeting memory. Hybrid work and the rising volume of online meetings continue to increase demand for knowledge capture that goes beyond basic transcription. Economic pressure on productivity and AI adoption policies favor such tools. Timing: excellent.

Technically feasible using mature speech-to-text, vector databases for memory, and LLM APIs. The main challenges are accurate cross-meeting context linking, data privacy and compliance (recording consent, GDPR), and managing variable API costs at scale. Operational expenses for transcription and LLM calls can be high. Scalability is strong in the cloud. Feasibility is high for teams with AI experience, though compliance and cost control are key risks.
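To make the "vector databases for memory" idea concrete, here is a minimal Python sketch of cross-meeting retrieval scoped by client. It is an illustration only and not Spellar's implementation: the names `MeetingMemory`, `add`, and `query` are hypothetical, and plain word-count vectors with cosine similarity stand in for the learned embeddings and vector database a real system would likely use.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


class MeetingMemory:
    """Hypothetical in-memory store of transcript snippets, keyed by client."""

    def __init__(self):
        # Each entry: (client, meeting_id, text, word-count vector)
        self.snippets = []

    def add(self, client, meeting_id, text):
        self.snippets.append((client, meeting_id, text, Counter(tokenize(text))))

    def query(self, client, question, top_k=1):
        """Return up to top_k (meeting_id, text) pairs for one client,
        ranked by similarity to the question; zero-score matches are dropped."""
        q = Counter(tokenize(question))
        scored = [
            (cosine(q, vec), meeting_id, text)
            for c, meeting_id, text, vec in self.snippets
            if c == client
        ]
        scored.sort(key=lambda item: item[0], reverse=True)
        return [(mid, text) for score, mid, text in scored[:top_k] if score > 0]


if __name__ == "__main__":
    memory = MeetingMemory()
    memory.add("acme", "call-1", "Acme asked for a discount on the annual plan")
    memory.add("acme", "call-2", "Acme wants the security review finished before signing")
    memory.add("globex", "call-1", "Globex asked about the annual plan pricing")
    print(memory.query("acme", "what did they say about the annual plan discount"))
```

Filtering by client before ranking mirrors the client-centric organization described in the analysis. The "accurate cross-meeting context linking" challenge is exactly what this toy scoring would handle poorly, since it matches only on shared words.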

Primary users: sales professionals, consultants, account managers, executives, and project leads who hold recurring client or internal meetings. Industries: B2B tech, consulting, professional services, finance. Mainly English-speaking markets (US, Europe). TAM for AI meeting assistants exceeds $5B; SAM for memory/context-focused tools ~$500M-$1B; SOM for Spellar initially $20-60M. Core pain points: wasted time retrieving past meeting details and loss of context causing repeated questions or missed follow-ups. High willingness to pay ($20-80/user/month) for proven productivity gains.

Competition level: Medium. Direct competitors: 1. Otter.ai (otter.ai), 2. Fireflies.ai (fireflies.ai), 3. MeetGeek (meetgeek.ai), 4. tl;dv (tldv.io), 5. Grain (grain.com). Spellar's advantages: explicit cross-meeting memory and queryability, client-centric organization, and user choice of multiple LLMs. Disadvantages: newer entrant with likely fewer native integrations and less brand recognition than Otter or Fireflies; potentially higher per-meeting AI costs. Differentiation via 'memory' focus is meaningful but the AI meeting intelligence space is becoming crowded.

Similar Products

FileFlan

Instant private universal file sharing

▲ 100 votes

Whiteout

Auto-redact sensitive info from Mac screenshots

▲ 83 votes

Polygram

AI-native design and coding app to build mobile & web apps

▲ 81 votes

Arkiv

Modern Asset Protection for Designers

▲ 68 votes

Tweetmonials

Turn X praise into testimonials and trust signals

▲ 67 votes

Stagent

Drive Claude Code through long tasks it would otherwise drop

▲ 58 votes