Tracea

Datadog for AI agents - traces, RCA, and team memory

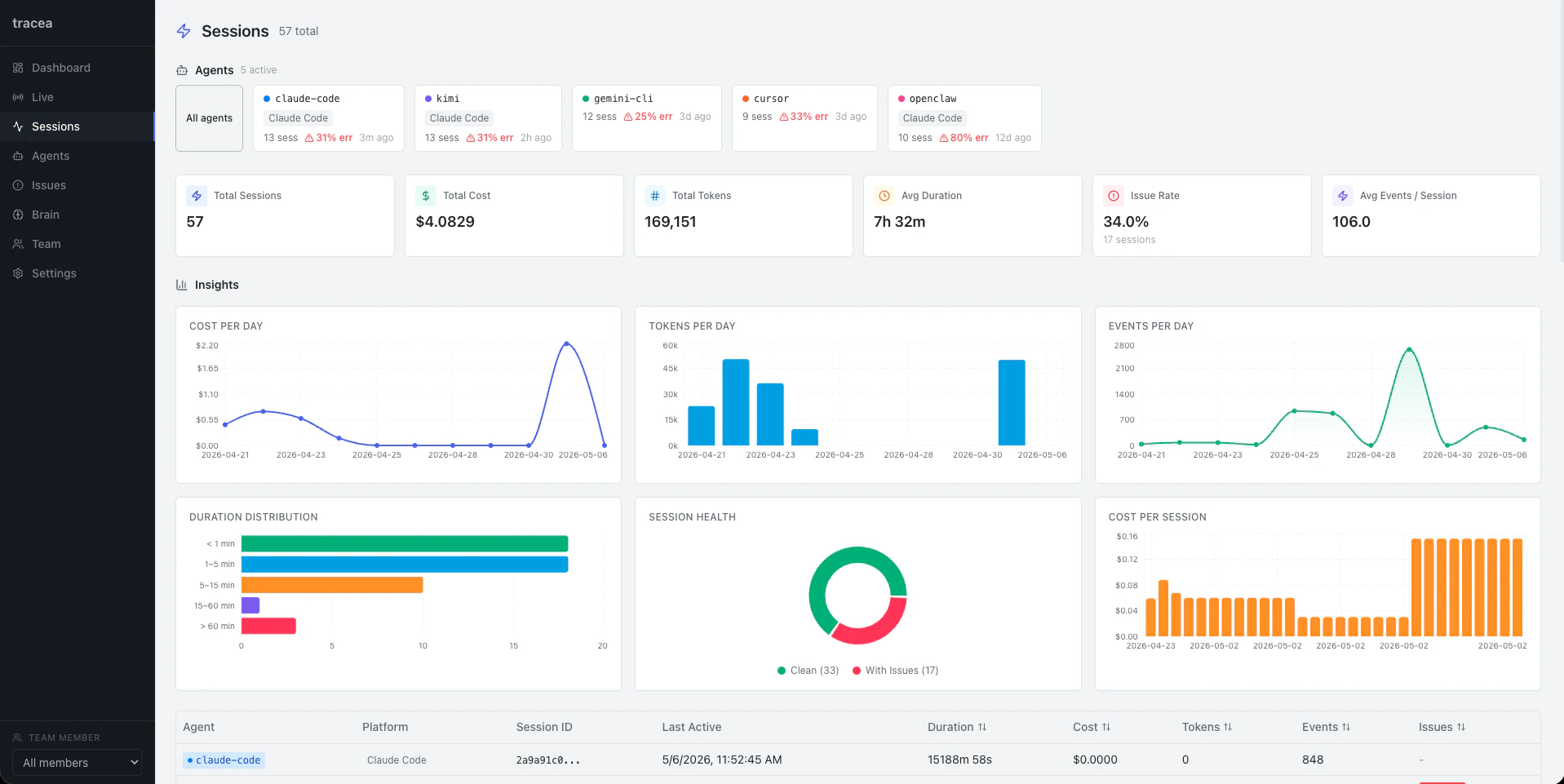

Agents fail silently. You fire one off, it runs, and nothing comes back: no trace, no cost data, no idea which call broke. Tracea captures every tool call, LLM response, and cost spike. Automatic root cause analysis (RCA) tells you exactly why a run failed. YAML detection rules catch loops, spikes, and silent errors before they hit production. Self-hosted, deployed with one Docker command, and no data leaves your network. Company Brain turns every session into team memory, so agents start each run smarter.

AI Analysis

Tracea is a self-hosted observability tool for AI agents, positioned as "Datadog for AI agents." Its core features are: capturing every tool call, LLM response, and cost spike; automatic root cause analysis (RCA) for failures; YAML detection rules that catch loops, spikes, and silent errors before production; and "Company Brain," which converts sessions into shared team memory so later runs start smarter. It addresses silent agent failures, poor visibility into agent operations and costs, difficult debugging, and the loss of organizational knowledge. Because it deploys with one Docker command and no data leaves the network, its value proposition centers on privacy-first, proactive monitoring and continuous improvement for reliable AI agent development.

In 2025-2026, AI agent adoption is accelerating as LLM technology matures and enterprise demand for reliable autonomous systems rises. Privacy regulations and concerns about sharing data with cloud services favor self-hosted solutions. Demand for observability and RCA grows as agents move into production. This aligns with industry trends toward AI reliability and internal toolchains. Timing: excellent.

Feasibility: high. Technical difficulty is manageable with established tracing, LLM integration, and Docker technologies. Development and operating costs are low thanks to the self-hosted, open-source model and one-command deployment. Supply-chain and compliance risks are minimal because data stays on-premises. Team memory has strong scalability potential through knowledge bases. The product is a good fit for a YC developer-tools team.

Main target users: AI/ML engineers, developers building autonomous agents, and technical teams at startups and enterprises (demographics: tech professionals aged 25-45). Industries: AI development, software/SaaS. Geography: global, with a focus on the US and Europe (YC-aligned). Estimated market size: the AI observability sector is expanding rapidly (a large TAM within the multi-billion-dollar devtools market; the SAM is agent-specific tools; the SOM is the self-hosted/open-source segment). Core pain points: silent failures, lack of visibility and RCA, repeated errors, and knowledge loss. Willingness to pay is high for time-saving, privacy-focused reliability tools.

Competition: medium. Direct competitors: LangSmith (smith.langchain.com), Helicone (helicone.ai), Arize Phoenix (phoenix.arize.com), AgentOps (agentops.ai), and Traceloop (traceloop.com). Advantages over competitors: fully self-hosted with strict data privacy (no network egress), unique proactive YAML detection rules, and Company Brain for team memory and knowledge retention. Disadvantages: as a newer entrant, it may initially have fewer pre-built LLM integrations and less ecosystem support, and an open-source model could slow enterprise sales compared with VC-backed cloud competitors.


Similar Products

Graphbit PRFlow - AI Code Review Agent

AI code reviewer that catches what others miss

▲ 175 votes

Agent-Sin

AI agent that handles repeated tasks through reusable skills

▲ 78 votes

Staff.rip

Describe a code change in plain language and ship it

▲ 75 votes

Termux Lite

A powerful SSH terminal with FTP & SFTP support

▲ 75 votes

Mantel

Stop confusing your Claude Code sessions & terminal windows

▲ 72 votes

Stagent

Drive Claude Code through long tasks it would otherwise drop

▲ 58 votes